This blog is written by Poojita from Avanti Fellows. Team Avanti Fellows has been mentored and guided by Akhilesh from Project Tech4dev.

Finding the Right Problem

Since my last blog, our plans have changed quite a bit (to say the least xP). We started by revising our multi-factor recommendation engine, moving from rigid buckets to a weighted scoring model. But we realized it was still in the research phase with too many unanswered questions, it wasn’t really an “AI” project for the cohort and, most importantly, we couldn’t experiment lightly with something that directly affects student outcomes.

We spent quite a bit of time after this trying to identify the right AI use case within the org. After extensive discussions with T4D (Ashana and Lobo) and our co-CEO (Akshay), we pivoted to an existing product that needed to become more efficient: mentorship summaries.

The Life of a Mentor & Mentee

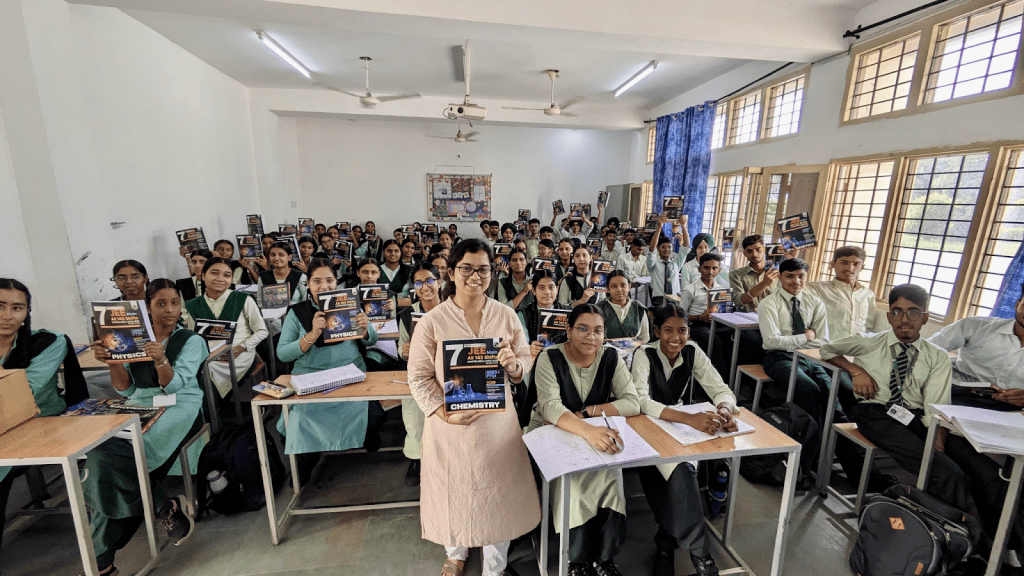

Meet Khushboo – eldest of 6 children, preparing for the JEE Mains exam. She will be the first in her family to get a college degree. She is one of our most diligent students, never missing a test and always reviewing every report. She sees her chapter-wise performance but doesn’t know what to prioritise or what strategy to follow.

Meet Bhumika ma’am, her mentor, who teaches 4+ classes every day and still finds time to check in with each mentee. To give Khushboo the direction she needs, Bhumika has to dig through multiple test reports, old notes, category cutoffs and the upcoming test syllabus. Multiply this by ten or twelve students and “personalized” mentorship becomes overwhelming and impractical.

Problem Statement: How do we provide teachers with personalized insights for their students without adding to their workload?

Our Hypothesis

If we can give teachers an accurate, concise, AI-generated summary of each student’s key performance trends, they can jump straight to strategic, personalized mentorship, spending more time coaching and less time on prep work and data-digging.

What We Built

We started with something quick and dirty: we fed Claude student test data and previous mentorship notes to see what analysis it could generate.

Since then, we’ve done extensive prompt engineering and experimented with different output structures and with which strategies to emphasize. Two expert teachers and four reviewers from our in-house curriculum and program teams evaluated these outputs on accuracy, clarity, length, missing content, relevance and hallucinations. We then synthesised their detailed feedback and prioritised it using a Frequency × Severity score.
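
To make the Frequency × Severity prioritisation concrete, here is a minimal Python sketch. It assumes frequency is the number of reviewers who raised a theme and severity is a small integer scale (say 1 = cosmetic, 3 = could mislead a student); the post doesn’t pin down either definition, and the themes and numbers below are hypothetical, not our actual review data.

```python
from dataclasses import dataclass


@dataclass
class FeedbackTheme:
    name: str
    frequency: int  # assumption: number of reviewers who raised this theme
    severity: int   # assumption: 1 = cosmetic ... 3 = could mislead a student

    @property
    def score(self) -> int:
        # Frequency x Severity: a theme must be both common and serious to rank high
        return self.frequency * self.severity


def prioritise(themes: list[FeedbackTheme]) -> list[FeedbackTheme]:
    """Return themes ordered from highest to lowest score (ties keep input order)."""
    return sorted(themes, key=lambda t: t.score, reverse=True)


if __name__ == "__main__":
    # Hypothetical themes, for illustration only
    themes = [
        FeedbackTheme("Theme A", frequency=2, severity=3),
        FeedbackTheme("Theme B", frequency=4, severity=3),
        FeedbackTheme("Theme C", frequency=5, severity=1),
    ]
    for theme in prioritise(themes):
        print(f"{theme.name}: {theme.score}")
```

Multiplying means a frequent but cosmetic issue (5 × 1) and a rare but serious one (1 × 3) both stay well below a theme that is common and serious (4 × 3).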

Key Findings

| Theme | Score | Quotes (Feedback from Reviewers) | Action Taken |
|---|---|---|---|
| Clarity: Tone seems slightly negative because of frequent use of the word “backfired” | 12 | Words like ‘backfired’, ‘unbalanced’ can be avoided | Added these words to the list of words to avoid |
| Strategy: Medium/Low Priority chapters should not always be skipped | 6 | …(better to skip these for now) should be avoided. This will convey a wrong message to students | Rephrased the guidance to nudge the mentor to find out the reason for low performance in these chapters. Only if it’s a knowledge gap should they discuss skipping these chapters for now and focusing on HP (high-priority) chapters. |
| Missing content: Rationale for chapter-level recommendations | 6 | For all chapters, reason could be mentioned sidewise maybe in a tabular form | Reformatted into a table with a performance trend column to compare across tests |
| Hallucinations: Imaginary timelines and factual fabrications at the chapter level | 6 | How was it decided that next test is after 2 weeks? Advice for chemical bonding seemed made up. | Added more data-grounding rules: in addition to the Boundaries section, added examples of what not to do in individual sections |

Prompt Evolution (V4: Prototype → V7: Pilot)

| Dimension | V4 Snippets | V7 Change | V7 Snippets |
|---|---|---|---|
| Tone | “When the data shows a clear strategic error, address it directly… not sugar-coating reality.” | Shifted to a firm but empathetic tone; banned judgmental words. | “You only use empathetic language even though you are direct. Avoid words like ‘backfired’, ‘red flag’.” |
| Priority Strategy Logic | “Medium and low priority chapters with poor accuracy can be skipped entirely.” | Added conditional skipping logic (only if it’s a knowledge gap) | “If it is a knowledge gap, suggest skipping studying this chapter for now.” “If a careless mistake, ask them to review using Mark for Review.” |
| Data Grounding | Improvements stated without test reference | Mandatory test attribution (current / previous / average) | “Always specify whether data is from the current test, previous test or average.” |
| Performance Pattern Table | Inconsistent descriptions | Introduced rule-based pattern logic | “If the chapter was not present in previous tests → ‘New chapter’.” “If first attempt → ‘First attempt; correct/incorrect’.” “If attempted in both → describe score change (‘+8 → -1’).” |
| Guidance Quality | Overstated wins (“brave to attempt more questions”) | Tightened criteria: highlight only major wins and avoid misleading praise. | “Do not overstate wins… Avoid assuming confidence. Do not call them ‘brave’ if attempting more questions led to lower accuracy.” |
| Assumption Boundaries | Occasional general claims, e.g. “this is a foundation chapter” | No assumptions allowed; strictly data-based | “Avoid phrases like ‘foundation chapter’. This information is NOT provided to you.” “Do not make any comments on the topic or question priority as this is not information provided to you.” “Do not make any assumptions on which is a strong chapter for the student unless it’s provided in the mentorship notes.” “Always ensure that everything you’re stating is grounded on data provided.” |
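
In V7 the Performance Pattern Table rules live in the prompt and the model applies them. One possible alternative (a hypothetical swap, not what V7 does) is to compute the labels deterministically in code and hand them to the model as data, so the wording can’t drift. A minimal Python sketch, assuming each chapter’s result per test is available as an attempted flag plus net marks (hypothetical field names), and that a first attempt counts as “correct” when its net marks are positive:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChapterResult:
    attempted: bool  # hypothetical field: did the student attempt this chapter's questions?
    marks: int       # hypothetical field: net marks for the chapter in that test


def performance_pattern(previous: Optional[ChapterResult], current: ChapterResult) -> str:
    """Label a chapter's trend; `previous` is None if the chapter wasn't in earlier tests."""
    if previous is None:
        return "New chapter"
    if not current.attempted:
        # Assumption: the quoted rules don't cover skipping the chapter this time
        return "Not attempted"
    if not previous.attempted:
        # Assumption: positive net marks on a first attempt count as "correct"
        outcome = "correct" if current.marks > 0 else "incorrect"
        return f"First attempt; {outcome}"
    # Attempted in both tests: show previous → current marks, e.g. "+8 → -1"
    return f"{previous.marks:+d} → {current.marks:+d}"


if __name__ == "__main__":
    print(performance_pattern(ChapterResult(True, 8), ChapterResult(True, -1)))
```

Deterministic labels are also easy to unit-test, whereas prompt rules can only be checked by inspecting generated outputs.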

We built a tool that pulls together a student’s profile (name, gender, category), historical test performance, mentorship notes and latest results into a quick snapshot. The snapshot recommends what to discuss with the student and suggests next steps, so mentors can prep quickly and offer more focused support.
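
Here is a minimal sketch of how such a snapshot’s input could be assembled while honouring the Data Grounding rule, with every number tagged as current test, previous test or average. It assumes tests arrive oldest-first as {chapter: net marks} dictionaries, the last one being the latest test; the function name, data shapes and output format are hypothetical, not the tool’s actual code.

```python
from statistics import mean


def build_context(profile: dict[str, str], tests: list[dict[str, int]], notes: list[str]) -> str:
    """Flatten student data into prompt text, labelling the source of every score.

    Hypothetical shapes: `tests` is oldest-first, each {chapter: net marks};
    the last entry is the current test.
    """
    current, earlier = tests[-1], tests[:-1]
    previous = earlier[-1] if earlier else {}
    lines = ["Student profile: " + ", ".join(f"{k}={v}" for k, v in profile.items())]
    for chapter, marks in current.items():
        lines.append(f"{chapter}: {marks:+d} (current test)")
        if chapter in previous:
            lines.append(f"{chapter}: {previous[chapter]:+d} (previous test)")
        # Average over every test (including the current one) that had this chapter
        history = [t[chapter] for t in tests if chapter in t]
        lines.append(f"{chapter}: {mean(history):+.1f} (average across {len(history)} test(s))")
    lines.append("Mentorship notes:")
    lines.extend(f"- {note}" for note in notes)
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_context(
        {"name": "Student X", "category": "GEN"},  # hypothetical student
        [{"Chemical Bonding": 8}, {"Chemical Bonding": -1, "Kinematics": 4}],
        ["Hypothetical note: asked for a revision plan."],
    ))
```

Labelling at assembly time means the model never has to infer which test a number came from, which may make misattributed or invented figures easier to avoid and to spot.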

We’re currently piloting the tool with 6 mentors across schools in the Punjab residential program.

Demo: https://drive.google.com/file/d/14Sm7ZXlZdhIwfPUdWST4wTws7pTnXujg/view

Link to Live Solution: https://ai-mentorship-guide.avantifellows.org/test-it-out (Try ID: 07260747)

When Pilots Don’t Really Go According to Plan

We oriented all the teachers and kicked off the pilot. They committed to testing 60 summaries in 10 days. On the 9th day, we realised that zero sessions had happened.

Turns out, the program head used the summaries, picked out performance patterns and got strict about expectations. Students panicked and bombarded teachers with requests for doubt-solving and revision, leaving no bandwidth for mentorship sessions xP

While this derailed our “product” pilot, there’s an interesting silver lining. The tool itself triggered a spillover effect: students are now organically seeking out mentorship and guidance, even if not in the structured way we planned. Since this demand is coming from students themselves rather than being pushed top-down, teachers are now prioritizing it (and rightly so).

Not the pilot we designed, but it’s showing students actually want this kind of support!

What’s Next

Phase 1 – Build a robust evaluation layer: Introduce structured evaluation of each summary before it reaches teachers, to systematically improve reliability, reduce errors and move beyond relying on a single raw LLM output.
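
As a rough illustration of one cheap, deterministic piece such an evaluation layer could include, here is a Python sketch that flags summaries containing phrases reviewers and the V7 prompt steer away from, or that exceed a length budget, before anything reaches a teacher. The phrase list is drawn from this post but is illustrative, not exhaustive (it bans “brave” outright, which is stricter than V7’s conditional rule), and the 400-word budget is a hypothetical number. Checks like this would complement, not replace, reviewing summaries for accuracy and grounding.

```python
import re

# Illustrative list drawn from reviewer feedback and the V7 prompt; not exhaustive.
BANNED_PHRASES = ["backfired", "red flag", "unbalanced", "foundation chapter", "brave"]
MAX_WORDS = 400  # hypothetical length budget


def find_issues(summary: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return human-readable problems; an empty list means the summary passed."""
    issues = []
    lowered = summary.lower()
    for phrase in BANNED_PHRASES:
        # \b word boundaries so "brave" doesn't match inside "bravery"
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            issues.append(f"banned phrase: {phrase!r}")
    word_count = len(summary.split())
    if word_count > max_words:
        issues.append(f"too long: {word_count} words (limit {max_words})")
    return issues


def ready_for_teacher(summary: str) -> bool:
    """Gate delivery: True only if no issues were detected."""
    return not find_issues(summary)


if __name__ == "__main__":
    draft = "Attempting more questions backfired in Physics."
    print(find_issues(draft))
```

A summary that fails the gate could then be regenerated or routed to a human reviewer instead of going straight to the mentor.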

Phase 2 – Scale across programs: Expand from 2 pilot schools to ~30 residential schools across our other two programs while maintaining quality and contextual accuracy.

What Helped and What We’re Looking Forward To

The cohort has been incredibly valuable in helping us think through what’s feasible on both the tech and behavior sides, and in pushing us to focus on where we can actually make an impact.

One area we’re excited to explore more in future sessions is the on-ground implementation of AI tools beyond just building and evaluating them. In the nonprofit space, so much depends on operational co-ordination across teams, often outside the product team’s control. It would be great to learn from other organizations about:

- Proactive strategies to prevent implementation roadblocks specific to AI adoption

- Proven approaches to drive consistent AI tool adoption (not just one-time trials)

Looking forward to diving deeper into the messy, real-world parts of making AI tools actually work!