Three organizations, in addition to Tech4Dev, gathered at the Udhyam office in central Bangalore on December 3rd to discuss a shared challenge. The representatives from The Apprentice Project, Udhyam Learning Foundation, and Inqui-Lab Foundation all had the same problem: they needed to evaluate thousands of student submissions, but they didn’t have enough teachers or volunteers to do it fairly and consistently.

What We Learned from Our Partners

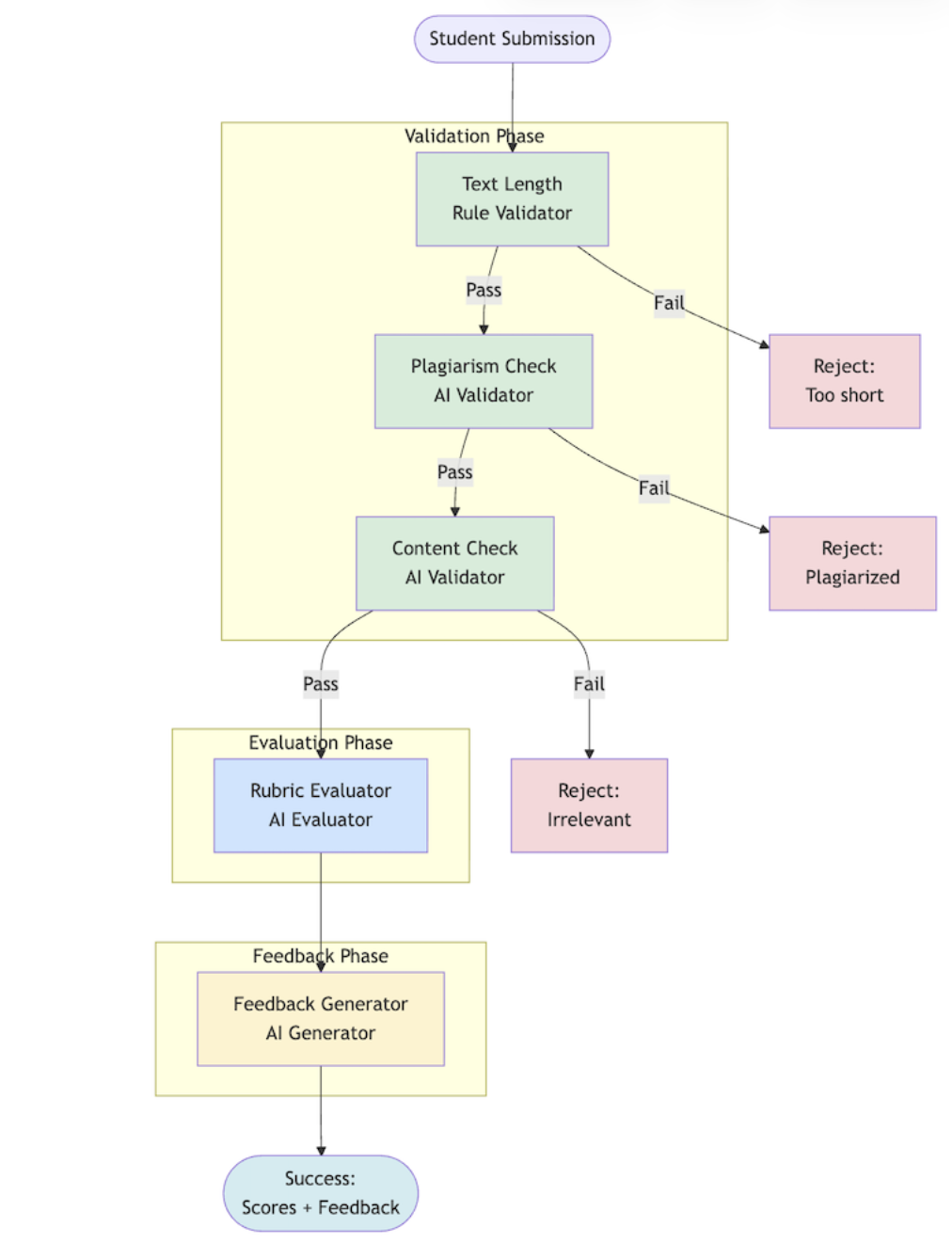

In our meeting, we realized something important: despite working in different domains (entrepreneurship, social-emotional learning, innovation), all three organizations followed a similar pattern when evaluating submissions.

First, they validate. Is the submission in the right format? Does it contain inappropriate content? Is it copied from someone else’s work? These quick checks filter out invalid submissions before spending time on detailed evaluation.

Second, they evaluate. Apply the rubric. Score the work. Determine if it meets expectations. This is the expensive, time-consuming part that requires expertise and consistency.

Third, they provide feedback. Students don’t just need a score – they need to understand what they did well and how to improve. Good feedback is encouraging, specific, and actionable.

Each organization had built its own solution, which was possible because each has an in-house tech team. Most organizations in the social and development sector do not have the resources to maintain one. Together, we brainstormed whether we could build common infrastructure based on what these three organizations have learned.

Our Solution: Assessment Blocks You Can Build With

Imagine building with LEGO blocks. Each block does one specific thing. You can snap them together in different ways to build exactly what you need.

That’s the core idea behind the Assessment Pipeline that we plan to build. Blocks would come in three types:

Validator Blocks are the quick bouncers at the door. They filter out invalid submissions before you spend money on AI processing.

Some validators are simple rules:

- “Is this text at least 10 words long?”

- “Is this video between 1 and 5 minutes long?”

- “Is this image smaller than 10MB?”

These cost nothing to run. They’re instant.
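As a rough illustration, the three rules above could be written as plain functions. This is a minimal sketch: the `Submission` record and its field names are assumptions made for the example, not part of any existing platform code.

```python
from dataclasses import dataclass


# Hypothetical submission record; the field names are illustrative assumptions.
@dataclass
class Submission:
    text: str = ""
    video_seconds: float = 0.0
    image_bytes: int = 0


def min_words(sub: Submission, minimum: int = 10) -> bool:
    """True if the text has at least `minimum` whitespace-separated words."""
    return len(sub.text.split()) >= minimum


def video_length_ok(sub: Submission, low: float = 60, high: float = 300) -> bool:
    """True if the video runs between 1 and 5 minutes, inclusive."""
    return low <= sub.video_seconds <= high


def image_size_ok(sub: Submission, limit: int = 10 * 1024 * 1024) -> bool:
    """True if the image is smaller than 10 MB."""
    return sub.image_bytes < limit
```

Checks like these need no model call, which is why they can run on every submission before anything else.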

Other validators use lightweight AI models:

- “Does this text contain profanity?”

- “Is this submission copied from another student’s work?”

- “Did the student upload a selfie instead of their homework?”

These do incur a small cost, but save you from running expensive analysis on junk submissions.
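The post does not say which models back these checks. To show where a copy check would sit, here is a minimal sketch that swaps in a plain string-similarity measure from Python's standard library instead of an AI model. The function name `looks_copied` and the 0.9 threshold are assumptions for illustration.

```python
from difflib import SequenceMatcher


def looks_copied(text: str, earlier: list[str], threshold: float = 0.9) -> bool:
    """Flag a submission that is nearly identical to an earlier one.

    Stand-in for a lightweight AI model: this only compares characters,
    so it will miss paraphrased copies.
    """
    normalized = " ".join(text.lower().split())
    for other in earlier:
        other_normalized = " ".join(other.lower().split())
        ratio = SequenceMatcher(None, normalized, other_normalized).ratio()
        if ratio >= threshold:
            return True
    return False
```

A real validator block would likely replace the similarity measure with a model call, while keeping the same yes/no answer for the rest of the pipeline.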

Evaluator Blocks are where the real assessment happens. These use AI models to:

- Score submissions against your rubric

- Check if all required elements are present

- Compare the work to expected standards

- Generate detailed assessment data

Feedback Blocks turn cold scores into warm, encouraging messages. They take the evaluation results and create personalized feedback in simple language – whether that’s Hindi, romanized Hindi, English, or another language your students speak.

Here’s what makes this powerful: you can chain these blocks together in any order you want. And if something changes – maybe you want to add a plagiarism check, or update your rubric – you just add or swap out one block and keep the rest of your pipeline intact.
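One way to picture chaining and swapping is to treat each block as a function that receives the running state and returns an updated copy. This is a sketch under that assumption; the `Block` type, `run_pipeline`, and the placeholder scores are hypothetical and do not describe the platform's actual interface.

```python
from typing import Callable

# A block takes the running state (a dict) and returns an updated copy.
# This shape is an assumption for illustration.
Block = Callable[[dict], dict]


def run_pipeline(blocks: list[Block], submission: dict) -> dict:
    """Run blocks in order, stopping as soon as one marks the state invalid."""
    state = {**submission, "valid": True}
    for block in blocks:
        state = block(state)
        if not state["valid"]:
            break  # a validator rejected the submission; skip costlier blocks
    return state


def length_check(state: dict) -> dict:
    """Rule validator: require at least 10 words."""
    ok = len(state["text"].split()) >= 10
    return {**state, "valid": ok}


def rubric_v1(state: dict) -> dict:
    # Placeholder for an AI evaluator call; the score is hard-coded.
    return {**state, "score": 3, "rubric": "v1"}


def rubric_v2(state: dict) -> dict:
    # Placeholder for an updated rubric.
    return {**state, "score": 4, "rubric": "v2"}


pipeline = [length_check, rubric_v1]
# Updating the rubric means replacing one block; the rest stays as it is.
updated = [rubric_v2 if block is rubric_v1 else block for block in pipeline]
```

Because every block has the same shape, the list can be reordered or extended without touching the blocks themselves.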

Example:

Students submit two business ideas. The system needs to check if the ideas are valid, evaluate them against a rubric, and give encouraging feedback in Hindi.

Block 1: Text Length Check (rule validator)

→ Rejects submissions that are too short (under 10 words) or too long (over 500 words)

Block 2: Plagiarism Check (AI validator)

→ Verifies that the two submitted ideas are actually different from each other

Block 3: Content Check (AI validator)

→ Verifies that the two submitted ideas are relevant to the assessment

Block 4: Rubric Evaluation (evaluator)

→ Scores each idea on articulation, feasibility, and alignment with program values

Block 5: Feedback Generation (feedback generator)

→ Creates encouraging feedback in simple Hindi with emojis, suitable for 16-year-olds

Total time: About 30 seconds. Compare that to human evaluation: 15 minutes per submission, with quality varying based on evaluator fatigue and subjective interpretation.
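The five blocks in this example could also be written down as plain data. The sketch below is illustrative only: the keys, block names, and helper functions are assumptions, and only the word limits, rubric criteria, and feedback requirements come from the example itself.

```python
# Illustrative configuration only: keys and block names are assumptions.
BUSINESS_IDEAS_PIPELINE = [
    {"block": "text_length", "kind": "rule_validator",
     "min_words": 10, "max_words": 500},
    {"block": "plagiarism", "kind": "ai_validator",
     "check": "the two ideas differ from each other"},
    {"block": "content", "kind": "ai_validator",
     "check": "the two ideas are relevant to the assessment"},
    {"block": "rubric", "kind": "evaluator",
     "criteria": ["articulation", "feasibility", "alignment with program values"]},
    {"block": "feedback", "kind": "feedback_generator",
     "language": "Hindi", "tone": "encouraging", "emojis": True, "audience_age": 16},
]


def text_length_ok(text: str, step: dict) -> bool:
    """Block 1: accept texts whose word count is within the configured range."""
    words = len(text.split())
    return step["min_words"] <= words <= step["max_words"]


def ai_backed_blocks(config: list[dict]) -> list[str]:
    """Names of the blocks that would call a model and so incur a cost."""
    return [step["block"] for step in config if step["kind"] != "rule_validator"]
```

Keeping the pipeline as data like this makes it easy to see at a glance which steps are free and which ones call a model.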

Building on What Already Works

We’re not starting from scratch. The assessment pipeline will build on infrastructure we’ve already created on our Kaapi platform:

Our Unified API lets you work with any AI provider (OpenAI, Google, Anthropic) without getting locked into one company’s tools. If a better or cheaper model comes out tomorrow, you can switch without rebuilding your pipeline.
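The general idea of avoiding lock-in can be sketched as pipeline code that depends on a small interface rather than on any one vendor's client. Everything below is hypothetical: `ChatModel`, the stub providers, and `score_submission` are invented for illustration and are not the Unified API itself. The stubs make no network calls.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical minimal interface a pipeline block depends on."""

    def complete(self, prompt: str) -> str: ...


class StubProviderA:
    """Stand-in for one vendor's client; makes no network calls."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class StubProviderB:
    """Stand-in for a second vendor's client; makes no network calls."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


def score_submission(model: ChatModel, submission: str) -> str:
    # The block only knows about the ChatModel interface, so switching
    # providers means passing in a different object.
    return model.complete(f"Score this submission: {submission}")
```

Switching from `StubProviderA()` to `StubProviderB()` changes one argument and leaves `score_submission` untouched.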

Our Configuration System stores your prompts and settings in a way that makes them easy to update and version control. Made a change that didn’t work? Roll back to the previous version with one click.
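To make versioning and rollback concrete, here is a minimal in-memory sketch. The `VersionedConfig` class is a hypothetical illustration of the idea, not how the Configuration System is implemented.

```python
class VersionedConfig:
    """Keeps every saved version of a config so the latest can be undone."""

    def __init__(self, initial: dict):
        self._history = [dict(initial)]

    @property
    def current(self) -> dict:
        """A copy of the latest version."""
        return dict(self._history[-1])

    @property
    def version(self) -> int:
        """1 for the initial config, incremented on each update."""
        return len(self._history)

    def update(self, **changes) -> None:
        """Save a new version with the given keys changed."""
        self._history.append({**self._history[-1], **changes})

    def rollback(self) -> None:
        """Drop the latest version; the initial version is never removed."""
        if len(self._history) > 1:
            self._history.pop()
```

For example, after `cfg.update(prompt="new wording")` a call to `cfg.rollback()` restores the previous prompt.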

Our Evaluation Framework helps you test and improve your pipelines. Upload a set of submissions with known correct answers, run them through your pipeline, and see how accurate it is. This helps you refine your prompts before you process thousands of real submissions.
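The accuracy check described here comes down to comparing pipeline output with the known answers. The sketch below assumes exact-match scoring on integer scores; the `accuracy` function and the toy pipeline are hypothetical stand-ins, and a real framework may use other measures.

```python
from typing import Callable


def accuracy(pipeline: Callable[[str], int], labelled: list[tuple[str, int]]) -> float:
    """Fraction of submissions where the pipeline's score equals the known score."""
    if not labelled:
        raise ValueError("need at least one labelled submission")
    correct = sum(1 for text, expected in labelled if pipeline(text) == expected)
    return correct / len(labelled)


def toy_pipeline(text: str) -> int:
    # Placeholder scorer: one point per word, capped at 5.
    return min(5, len(text.split()))


# Each pair is (submission text, known correct score); made-up data.
labelled_set = [("a b c", 3), ("a b", 2), ("a", 2)]
result = accuracy(toy_pipeline, labelled_set)
```

Running the same labelled set again after each prompt change shows whether the change helped or hurt.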

Join Us

We learned something important on December 3rd: when organizations share their challenges and solutions, everyone benefits. This is open infrastructure for the social sector. We’re documenting everything we learn, sharing our code, and inviting others to contribute.

If you’re working on similar challenges – whether in education, healthcare, agriculture, or any other social impact domain – we want to hear from you.